Sony has this week launched a new flagship TV at a London event, confirming months of rumour and speculation that have been rife since CES 2016. Known as the Bravia ZD9 series, the 4K HDR LED LCD will take up the mantle of Sony’s top-end consumer TV for the rest of the year, sitting even above the outstanding XD94 (which we’ve reviewed here). Three screen sizes are available, namely the 65-inch KD-65ZD9, the 75-inch KD-75ZD9 and a mammoth 100-inch KD-100ZD9.

In a hardly surprising move for Sony, the company has decided to keep mum on the peak brightness and the number of FALD (full array local dimming) zones, only going so far as to state that the new Z series “will be brighter than the XD94” and “will fulfil the Ultra HD Premium specifications”. Despite this, the Japanese manufacturer will not be seeking UHD Premium certification from the Ultra HD Alliance, in keeping with its current policy of adhering to its own “4K HDR” branding.

When asked specifically whether the ZD9 can hit 4000 nits, a company spokesperson coyly suggested that the figure only applied to the prototype unveiled at CES. Our best guess is that the Sony KD-65ZD9 (which has no fans behind the TV, and carries an energy efficiency rating of “B”) will do between 1500 and 2000 nits.

To put these peak luminance levels into perspective, the brightest 4K UHD (ultra high-definition) TV we’ve tested to date – Samsung’s UE65KS9500 (marketed as the KS9800 in the USA) – didn’t exceed 1500 nits; Panasonic’s DX902 reached 1300 nits; Sony’s own KD-75XD94 hit 800 nits; whereas the 2016 LG OLEDs generally range between 540 and 740 nits.

Those who claim that they don’t see the need for higher luminance because 300, 500, 600 or 800 nits on their TVs looks plenty bright are completely missing the point. To clear up some misconceptions, the high peak brightness is not applied to the entire screen in HDR, only to specular highlights (for example, reflections off a shiny object) and highlight details (e.g. the sun and clouds on a bright day) to impart realism. The “average” brightness (Average Picture Level or APL in tech speak) shouldn’t be drastically different between SDR (standard dynamic range) and HDR (high dynamic range) material.

The reason nits matter so much in HDR comes down to how the ST.2084 standard has been defined. The PQ (perceptual quantization) EOTF (electro-optical transfer function) used in HDR10 (Ultra HD Blu-ray, Netflix and Amazon HDR) and Dolby Vision deals in absolute luminance, which means that in order to accurately reproduce the director’s artistic intent, 10 nits in the HDR-mastered content SHOULD be delivered as 10 nits on screen; 20 nits as 20 nits; 100 nits as 100 nits; and so on and so forth.
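For readers who want to see what “absolute luminance” means in practice, here is a minimal Python sketch of the PQ curve using the constants published in ST.2084. The function names (`pq_eotf`, `pq_inverse_eotf`) are our own illustrative choices, not part of any TV firmware or library, and the sketch ignores bit depth and limited-range video levels.

```python
# Constants from the ST.2084 (PQ) definition.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PQ_PEAK_NITS = 10000.0  # PQ is defined up to 10,000 nits


def pq_eotf(signal):
    """Convert a normalised PQ signal (0.0 to 1.0) to absolute nits."""
    p = signal ** (1 / M2)
    return PQ_PEAK_NITS * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits):
    """Convert absolute nits (0 to 10,000) to a normalised PQ signal."""
    y = (nits / PQ_PEAK_NITS) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


if __name__ == "__main__":
    # Each luminance level maps to one fixed signal value, whatever the TV.
    for nits in (10, 20, 100, 1000, 4000):
        print(nits, "nits -> PQ signal", round(pq_inverse_eotf(nits), 4))
```

Because each signal value stands for one specific luminance, a display that cannot reach the encoded level has to decide what to do with it, which is where the differences between TVs arise.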

Here’s the problem: most 4K Blu-ray titles available to buy on the market at the moment are graded on 1000-nit mastering monitors, with a small handful mastered on 4000-nit Dolby Pulsars. When a 600-nit HDR TV is asked to display a 1000-nit HDR source video, there are three common ways this can be handled:

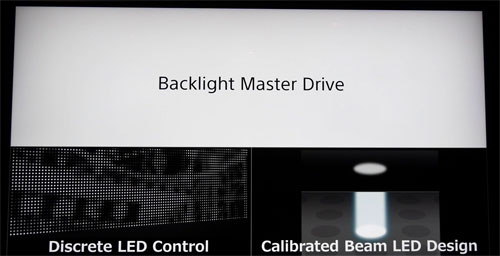
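To make the difference between clipping and compressing concrete, here is a rough Python sketch of three simple strategies a 600-nit display could apply to 1000-nit content. These are illustrative assumptions on our part: the function names, the knee position and the linear roll-off are hypothetical, and they are not necessarily the exact approaches shown in the chart or used by any manufacturer.

```python
def hard_clip(nits, display_peak=600.0):
    """Track the source exactly, then clip everything above the display peak."""
    return min(nits, display_peak)


def linear_scale(nits, display_peak=600.0, content_peak=1000.0):
    """Scale the whole range down so the content peak lands on the display peak."""
    return nits * display_peak / content_peak


def knee_rolloff(nits, display_peak=600.0, content_peak=1000.0, knee=0.75):
    """Track the source up to a knee point, then compress the rest.

    The knee fraction (0.75 of display peak) is an arbitrary illustrative value.
    """
    knee_nits = knee * display_peak
    if nits <= knee_nits:
        return nits
    # Squeeze [knee_nits, content_peak] into [knee_nits, display_peak].
    t = (min(nits, content_peak) - knee_nits) / (content_peak - knee_nits)
    return knee_nits + t * (display_peak - knee_nits)


if __name__ == "__main__":
    for source in (100, 400, 600, 800, 1000):
        print(source, hard_clip(source), linear_scale(source),
              round(knee_rolloff(source), 1))
```

Clipping keeps darker and mid-tone levels accurate but discards detail above 600 nits; scaling keeps all the detail but dims the whole picture; a roll-off is a compromise between the two. A display that can reach the mastering peak needs none of these.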

As you can see, this is why we’re so excited about the KD-65ZD9, KD-75ZD9 and KD-100ZD9 which, if designed correctly (and there’s no reason to doubt Sony, given the Japanese brand’s excellent video processing track record), can faithfully deliver the director’s vision right in your living room without clipping or compressing. Sony has credited its improved “X1 Extreme” processor (40% more powerful than the existing X1 found on the XD93 and XD94) and “Backlight Master Drive” technology for the ZD9’s superior picture quality.

“Backlight Master Drive” comprises two key features, i.e. a discrete LED control that allows the illumination and dimming to be operated at a per-LED rather than zonal level; and a uniquely designed optical pathway that provides greater focus precision and reduces light leakage (and thus haloing/ blooming). Of course, Sony refused to be drawn on the number of zones (or LEDs, for that matter), but the result looked highly impressive in demos, although naturally we will wait until we get a Bravia Z Series review sample into our test room before passing judgement.

Other specs include a UHD resolution of 3840×2160, Motionflow XR 1200Hz processing, and active-shutter glasses (ASG) 3D functionality. There’s no Dolby Vision on board, and no TV can get software/ firmware-updated to become DV-capable since a hardware chip is required, but as we’ve discovered, a top-tier LED LCD can present HDR10 in a manner that’s not inferior to Dolby Vision, so it’s not a dealbreaker at all.

The Sony KD-65ZD9, KD-75ZD9 and KD-100ZD9 have been given list prices of £4,000, £7,000 and £60,000 respectively, with availability slated for September. The 65-inch KD-65ZD9 is particularly attractive: it’s slightly pricier than the Samsung UE65KS9500, but boasts a flat panel and 3D support; and it’s a good £1,000 less expensive than the LG OLED65E6V, whose 4K HDR presentation is somewhat hampered by limited peak brightness. And lest we forget, this is the first full-array local dimming LED TV from Sony that’s not 75 inches or larger to be released in the UK and Europe since 2011, which is surely cause for celebration…